What Is Important To Remember When Analyzing Data In Research

In our data-rich age, understanding how to analyze and extract true meaning from our business's digital insights is one of the primary drivers of success.

Despite the colossal volume of data we create every day, a mere 0.5% is actually analyzed and used for data discovery, improvement, and intelligence. While that may not seem like much, considering the amount of digital information we have at our fingertips, half a percent still accounts for a vast amount of data.

With so much data and so little time, knowing how to collect, curate, organize, and make sense of all of this potentially business-boosting data can be a minefield, but online data analysis is the solution.

In science, data analysis uses a more complex approach with advanced techniques to explore and experiment with data. On the other hand, in a business context, data is used to make data-driven decisions that will enable the company to improve its overall performance. In this post, we will cover the analysis of data from a business point of view while still going through the scientific and statistical foundations that are fundamental to understanding the basics of data analysis.

To put all of that into perspective, we will answer a host of important analytical questions and explore analytical methods and techniques, while demonstrating how to perform analysis in the real world with a 17-step blueprint for success.

What Is Data Analysis?

Data analysis is the process of collecting, modeling, and analyzing data to extract insights that support decision-making. There are several methods and techniques to perform analysis depending on the industry and the aim of the investigation.

All these various methods are largely based on two core areas: quantitative and qualitative research.

To explain the key differences between qualitative and quantitative research, here's a video for your viewing pleasure:

Gaining a better understanding of different techniques and methods in quantitative research as well as qualitative insights will give your analyzing efforts a more clearly defined direction, so it's worth taking the time to allow this particular knowledge to sink in. Additionally, you will be able to create a comprehensive analytical report that will skyrocket your analysis.

Why Is Data Analysis Important?

Before we go into detail about the categories of analysis along with its methods and techniques, you must understand the potential that analyzing data can bring to your organization.

- Informed decision-making: From a management perspective, you can benefit from analyzing your data as it helps you make decisions based on facts and not simple intuition. For example, you can understand where to invest your capital, detect growth opportunities, predict your income, or tackle uncommon situations before they become problems. Like this, you can extract relevant insights from all areas in your organization, and with the help of dashboard software, present the information in a professional and interactive way to different stakeholders.

- Reduce costs: Another great benefit is to reduce costs. With the help of advanced technologies such as predictive analytics, businesses can spot improvement opportunities, trends, and patterns in their data and plan their strategies accordingly. In time, this will help you save money and resources that would otherwise go into implementing the wrong strategies. And not just that, by predicting different scenarios such as sales and demand you can also anticipate production and supply.

- Target customers better: Customers are arguably the most crucial element in any business. By using analytics to get a 360° vision of all aspects related to your customers, you can understand which channels they use to communicate with you, their demographics, interests, habits, purchasing behaviors, and more. In the long run, it will drive success to your marketing strategies, allow you to identify new potential customers, and avoid wasting resources on targeting the wrong people or sending the wrong message. You can also track customer satisfaction by analyzing your clients' reviews or your customer service department's performance.

What Is The Data Analysis Process?

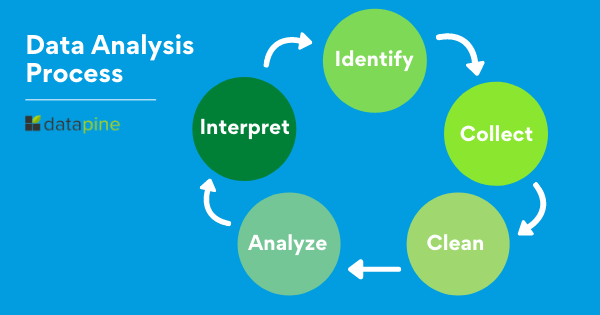

When we talk about analyzing data there is an order to follow in order to extract the needed conclusions. The analysis process consists of 5 key stages. We will cover each of them in more detail later in the post, but to start providing the needed context to understand what is coming next, here is a rundown of the 5 essential steps of data analysis.

- Identify: Before you get your hands dirty with data, you first need to identify why you need it in the first place. The identification is the stage in which you establish the questions you will need to answer. For example, what is the customer's perception of our brand? Or what type of packaging is more engaging to our potential customers? Once the questions are outlined you are ready for the next step.

- Collect: As its name suggests, this is the stage where you start collecting the needed data. Here, you define which sources of information you will use and how you will use them. The collection of data can come in different forms such as internal or external sources, surveys, interviews, questionnaires, and focus groups, among others. An important note here is that the way you collect the information will be different in a quantitative and qualitative scenario.

- Clean: Once you have the necessary data it is time to clean it and leave it ready for analysis. Not all the data you collect will be useful; when collecting large amounts of information in different formats, it is very likely that you will find yourself with duplicate or badly formatted data. To avoid this, before you start working with your data you need to make sure to erase any white spaces, duplicate records, or formatting errors. This way you avoid hurting your analysis with incorrect data.

- Analyze: With the help of various techniques such as statistical analysis, regressions, neural networks, text analysis, and more, you can start analyzing and manipulating your data to extract relevant conclusions. At this stage, you find trends, correlations, variations, and patterns that can help you answer the questions you first thought of in the identify stage. Various technologies on the market assist researchers and average business users with the management of their data. Some of them include business intelligence and visualization software, predictive analytics, and data mining, among others.

- Interpret: Last but not least you have one of the most important steps: it is time to interpret your results. This stage is where the researcher comes up with courses of action based on the findings. For example, here you would understand if your clients prefer packaging that is red or green, plastic or paper, etc. Additionally, at this stage, you can also find some limitations and work on them.

Now that you have a basic understanding of these steps, let's look at the top 10 essential methods.

10 Essential Types Of Data Analysis Methods

Before diving into the 10 essential types of methods, it is important that we quickly go over the main analysis categories. Moving from descriptive up to prescriptive analysis, the complexity and effort of data evaluation increase, but so does the added value for the company.

a) Descriptive analysis - What happened.

The descriptive analysis method is the starting point for any analytic reflection, and it aims to answer the question of what happened. It does this by ordering, manipulating, and interpreting raw data from various sources to turn it into valuable insights for your organization.

Performing descriptive analysis is essential, as it allows us to present our insights in a meaningful way. It is relevant to mention that this analysis on its own will not allow you to predict future outcomes or tell you the answer to questions like why something happened, but it will leave your data organized and ready to conduct further investigations.
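As a rough illustration of what "what happened" can look like in practice, the sketch below summarizes a small list of numbers with Python's standard `statistics` module. The `daily_orders` figures and the `describe` helper are hypothetical, made up for this example rather than taken from any real dataset or tool.

```python
import statistics

# Hypothetical daily order counts for one week (illustrative data only).
daily_orders = [120, 135, 128, 150, 142, 90, 85]


def describe(values):
    """Return a few basic descriptive statistics for a list of numbers."""
    return {
        "count": len(values),
        "mean": statistics.mean(values),
        "median": statistics.median(values),
        "min": min(values),
        "max": max(values),
        "stdev": statistics.stdev(values),  # sample standard deviation
    }


summary = describe(daily_orders)
for name, value in summary.items():
    print(f"{name}: {value}")
```

A summary like this describes the past week but says nothing about why the weekend values are lower, which is where the other categories come in.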

b) Exploratory analysis - How to explore data relationships.

As its name suggests, the main aim of exploratory analysis is to explore. Before it is carried out, there is still no notion of the relationship between the data and the variables. Once the data is investigated, exploratory analysis enables you to find connections and generate hypotheses and solutions for specific problems. A typical area of application for it is data mining.

c) Diagnostic analysis - Why it happened.

Diagnostic data analytics empowers analysts and executives by helping them gain a firm contextual understanding of why something happened. If you know why something happened as well as how it happened, you will be able to pinpoint the exact ways of tackling the issue or challenge.

Designed to provide direct and actionable answers to specific questions, this is one of the world's most important methods in research, among its other key organizational functions such as retail analytics.

d) Predictive analysis - What will happen.

The predictive method allows you to look into the future to answer the question: what will happen? In order to do this, it uses the results of the previously mentioned descriptive, exploratory, and diagnostic analysis, in addition to machine learning (ML) and artificial intelligence (AI). Like this, you can uncover future trends, potential problems or inefficiencies, connections, and causal relationships in your data.

With predictive analysis, you can unfold and develop initiatives that will not only enhance your various operational processes but also help you gain an all-important edge on the competition. If you understand why a trend, pattern, or event happened through data, you will be able to develop an informed projection of how things may unfold in particular areas of the business.

e) Prescriptive analysis - How will it happen.

Another of the most effective types of analysis methods in research. Prescriptive data techniques cross over from predictive analysis in the way that they revolve around using patterns or trends to develop responsive, practical business strategies.

By drilling down into prescriptive analysis, you will play an active role in the data consumption process by taking well-arranged sets of visual data and using it as a powerful fix to emerging issues in a number of key areas, including marketing, sales, customer experience, HR, fulfillment, finance, logistics analytics, and others.

Without further ado, here are the 10 essential types of data analysis methods along with some use cases in the business world:

1. Cluster analysis

The action of grouping a set of data elements in a way that said elements are more similar (in a particular sense) to each other than to those in other groups, hence the term 'cluster.' Since there is no target variable when clustering, the method is often used to find hidden patterns in the data. The approach is also used to provide additional context to a trend or dataset.

Let's look at it from a business perspective. In a perfect world, marketers would be able to analyze each customer separately and give them the best-personalized service, but let's face it, with a large customer base, it is simply impossible to do that in a timely manner. That's where clustering comes in. By grouping customers into clusters based on demographics, purchasing behaviors, monetary value, or any other factor that might be relevant for your company, you will be able to immediately optimize your efforts and give your customers the best experience based on their needs.
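To make the idea of grouping customers concrete, here is a minimal k-means sketch in plain Python. K-means is only one of several clustering techniques and the article does not prescribe it; the `customers` data (orders per year, annual spend), the starting centroids, and the `kmeans` helper are all assumptions made for illustration. A real analysis would normally scale the features first and use a tested library.

```python
import math


def kmeans(points, centroids, iterations=10):
    """Tiny k-means: assign each point to its nearest centroid, then
    move every centroid to the mean of the points assigned to it."""
    clusters = []
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(
                range(len(centroids)),
                key=lambda i: math.dist(p, centroids[i]),
            )
            clusters[nearest].append(p)
        # Keep the old centroid if a cluster happens to be empty.
        centroids = [
            tuple(sum(c) / len(cluster) for c in zip(*cluster)) if cluster else old
            for cluster, old in zip(clusters, centroids)
        ]
    return centroids, clusters


# Hypothetical customers: (orders per year, annual spend).
customers = [(2, 100), (3, 120), (2, 90), (20, 900), (22, 950), (19, 880)]
initial_centroids = [(2, 100), (20, 900)]  # fixed start keeps the run repeatable

centroids, clusters = kmeans(customers, initial_centroids)
for centroid, members in zip(centroids, clusters):
    print(centroid, members)
```

With this toy data the occasional buyers and the frequent buyers end up in separate groups, which is the kind of segmentation a marketing team could then target differently.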

2. Cohort analysis

This type of data analysis method uses historical data to examine and compare a determined segment of users' behavior, which can then be grouped with others with similar characteristics. By using this methodology, it's possible to gain a wealth of insight into consumer needs or a firm understanding of a broader target group.

Cohort analysis can be really useful to perform analysis in marketing as it will allow you to understand the impact of your campaigns on specific groups of customers. To exemplify, imagine you send an email campaign encouraging customers to sign up to your site. For this, you create two versions of the campaign with different designs, CTAs, and ad content. Later on, you can use cohort analysis to track the performance of the campaign for a longer period of time and understand which type of content is driving your customers to sign up, repurchase, or engage in other ways.

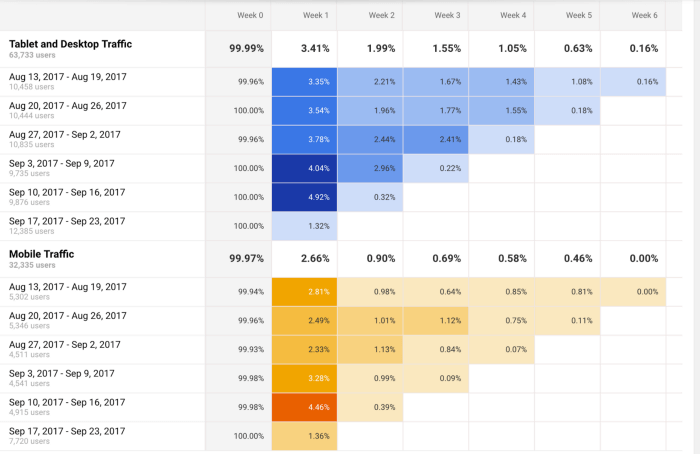

A useful tool to start performing the cohort analysis method is Google Analytics. You can learn more about the benefits and limitations of using cohorts in GA in this useful guide. In the image above, you see an example of how you visualize a cohort in this tool. The segments (device traffic) are divided into date cohorts (usage of devices) and then analyzed week by week to extract insights into performance.
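The week-by-week view described above can be approximated with a few lines of Python. This is only a sketch under stated assumptions: the `events` tuples (user, signup week, active week) and the `cohort_retention` helper are hypothetical and do not reflect how Google Analytics stores or computes cohorts.

```python
from collections import defaultdict

# Hypothetical activity log: (user_id, signup_week, active_week).
events = [
    ("u1", 1, 1), ("u1", 1, 2), ("u1", 1, 3),
    ("u2", 1, 1), ("u2", 1, 2),
    ("u3", 2, 2), ("u3", 2, 3),
    ("u4", 2, 2),
]


def cohort_retention(events):
    """Map each signup week to {weeks since signup: share of the cohort active}."""
    cohort_users = defaultdict(set)
    active = defaultdict(set)
    for user, signup_week, active_week in events:
        cohort_users[signup_week].add(user)
        active[(signup_week, active_week - signup_week)].add(user)

    retention = {}
    for cohort, offset in sorted(active):
        share = len(active[(cohort, offset)]) / len(cohort_users[cohort])
        retention.setdefault(cohort, {})[offset] = share
    return retention


retention = cohort_retention(events)
for cohort, shares in retention.items():
    print(f"signup week {cohort}: {shares}")
```

Reading across a row shows how quickly one cohort drops off; comparing rows shows whether later cohorts, for example those reached by a different campaign version, retain better.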

3. Regression analysis

Regression uses historical data to understand how a dependent variable's value is affected when one (linear regression) or more independent variables (multiple regression) change or stay the same. By understanding each variable's relationship and how they developed in the past, you can anticipate possible outcomes and make better decisions in the future.

Let's break it down with an example. Imagine you did a regression analysis of your sales in 2019 and discovered that variables like product quality, store design, customer service, marketing campaigns, and sales channels affected the overall result. Now you want to use regression to analyze which of these variables changed or if any new ones appeared during 2020. For example, you couldn't sell as much in your physical store due to COVID lockdowns. Therefore, your sales could've either dropped in general or increased in your online channels. Like this, you can understand which independent variables affected the overall performance of your dependent variable, annual sales.

If you want to go deeper into this type of analysis, check out this article and learn more about how you can benefit from regression.
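For readers who want to see the mechanics, the following sketch fits a simple linear regression (one independent variable) with ordinary least squares. The `marketing_spend` and `sales` numbers are invented for illustration and deliberately follow an exact straight line, and `fit_line` is a hypothetical helper, not part of any tool mentioned in this post.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical monthly figures (in thousands); sales = 2 * spend + 5 exactly.
marketing_spend = [10, 20, 30, 40, 50]
sales = [25, 45, 65, 85, 105]

slope, intercept = fit_line(marketing_spend, sales)
predicted = slope * 60 + intercept  # projected sales for a spend of 60
print(slope, intercept, predicted)
```

Real sales data will never sit perfectly on a line, so in practice you would also look at how well the line fits before trusting a projection.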

4. Neural networks

The neural network forms the basis for the intelligent algorithms of machine learning. It is a form of analytics that attempts, with minimal intervention, to understand how the human brain would generate insights and predict values. Neural networks learn from each and every data transaction, meaning that they evolve and advance over time.

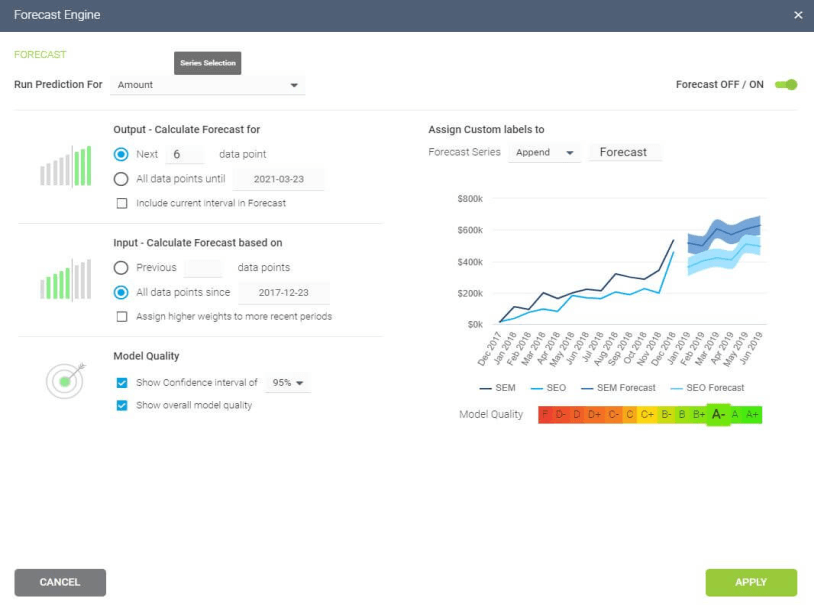

A typical area of application for neural networks is predictive analytics. There are BI reporting tools that have this feature implemented within them, such as the Predictive Analytics Tool from datapine. This tool enables users to quickly and easily generate all kinds of predictions. All you have to do is select the data to be processed based on your KPIs, and the software automatically calculates forecasts based on historical and current data. Thanks to its user-friendly interface, anyone in your organization can manage it; there's no need to be an advanced scientist.

Here is an example of how you can use the predictive analysis tool from datapine:


5. Factor analysis

Factor analysis, also called "dimension reduction," is a type of data analysis used to describe variability among observed, correlated variables in terms of a potentially lower number of unobserved variables called factors. The aim here is to uncover independent latent variables, an ideal method for streamlining specific segments.

A good way to understand this data analysis method is a customer evaluation of a product. The initial assessment is based on different variables like color, shape, wearability, current trends, materials, comfort, the place where they bought the product, and frequency of usage. Like this, the list can be endless, depending on what you want to track. In this case, factor analysis comes into the picture by summarizing all of these variables into homogeneous groups, for example, by grouping the variables color, materials, quality, and trends into a broader latent variable of design.

If you want to start analyzing data using factor analysis we recommend you take a look at this practical guide from UCLA.

6. Data mining

A method of data analysis that is the umbrella term for engineering metrics and insights for additional value, direction, and context. By using exploratory statistical evaluation, data mining aims to identify dependencies, relations, patterns, and trends to generate advanced knowledge. When considering how to analyze data, adopting a data mining mindset is essential to success. As such, it's an area that is worth exploring in greater detail.

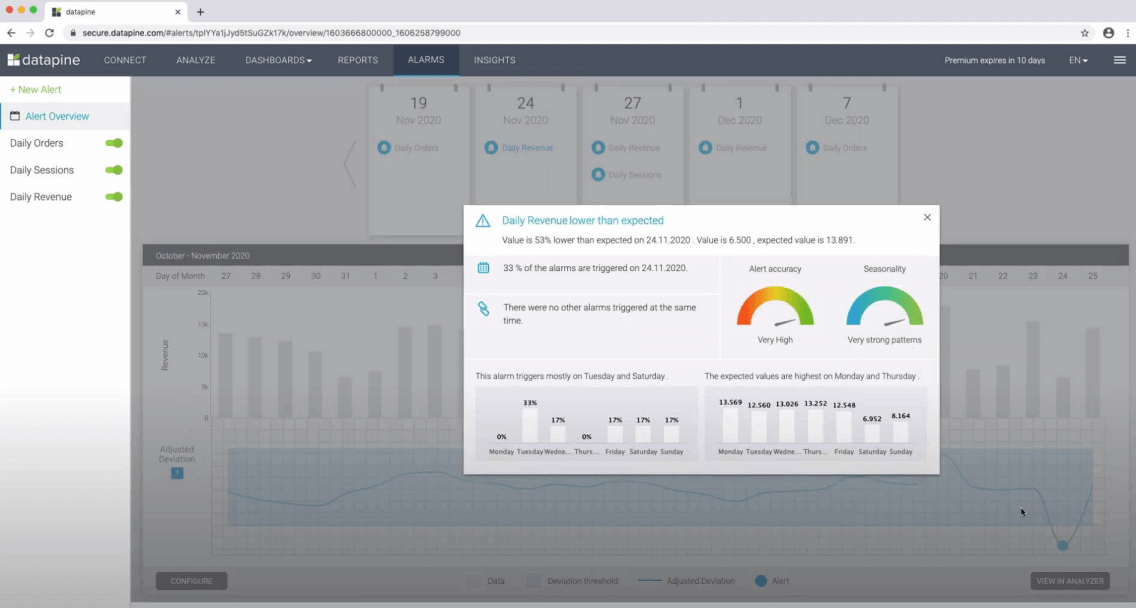

An excellent use case of data mining is datapine intelligent data alerts. With the help of artificial intelligence and machine learning, they provide automated signals based on particular commands or occurrences within a dataset. For instance, if you're monitoring supply chain KPIs, you could set an intelligent alert to trigger when invalid or low-quality data appears. By doing so, you will be able to drill down deep into the issue and fix it swiftly and effectively.

In the following picture, you can see how the intelligent alarms from datapine work. By setting up ranges on daily orders, sessions, and revenues, the alarms will notify you if the goal was not completed or if it exceeded expectations.
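The core idea of a range-based alert can be sketched in a few lines. To be clear, this is not datapine's implementation or API: the `check_ranges` function, the metric names, and the thresholds are hypothetical and only mirror the "expected range per metric" logic described above.

```python
def check_ranges(metrics, ranges):
    """Return a message for every metric that falls outside its expected range."""
    alerts = []
    for name, value in metrics.items():
        low, high = ranges[name]
        if value < low:
            alerts.append(f"{name}: {value} is below the expected minimum of {low}")
        elif value > high:
            alerts.append(f"{name}: {value} exceeds the expected maximum of {high}")
    return alerts


# Hypothetical expected ranges and today's values.
ranges = {"daily_orders": (100, 300), "sessions": (4000, 6000), "revenue": (8000, 12000)}
today = {"daily_orders": 80, "sessions": 5200, "revenue": 15000}

alerts = check_ranges(today, ranges)
for message in alerts:
    print(message)
```

Commercial tools typically learn the ranges from historical data instead of taking fixed thresholds, but the notification logic follows the same pattern.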


7. Text analysis

Text analysis, also known in the industry as text mining, works by taking large sets of textual data and arranging it in a way that makes it easier to manage. By working through this cleansing process in stringent detail, you will be able to extract the data that is truly relevant to your organization and use it to develop actionable insights that will propel you forward.

Modern tools accelerate the application of text analytics. Thanks to the combination of machine learning and intelligent algorithms, you can perform advanced analytical processes such as sentiment analysis. This technique allows you to understand the intentions and emotions of a text, for instance, whether it's positive, negative, or neutral, and then give it a score depending on certain factors and categories that are relevant for your brand. Sentiment analysis is often used to monitor brand and product reputation and to understand how successful your customer experience is. To learn more about the topic check out this insightful article.

By analyzing data from various word-based sources, including product reviews, articles, social media communications, and survey responses, you will gain invaluable insights into your audience, as well as their needs, preferences, and pain points. This will allow you to create campaigns, services, and communications that meet your prospects' needs on a personal level, growing your audience while boosting customer retention.
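As a very rough illustration of sentiment scoring, the sketch below counts positive and negative words from a tiny hand-made lexicon. The word lists and the `sentiment` function are assumptions for this example; the modern tools mentioned above rely on trained models rather than simple word counts, so treat this as a toy.

```python
import re

# Tiny hypothetical lexicon; real systems use far larger vocabularies or trained models.
POSITIVE = {"great", "love", "excellent", "fast", "helpful"}
NEGATIVE = {"bad", "slow", "broken", "poor", "rude"}


def sentiment(text):
    """Label a text as positive, negative, or neutral by counting lexicon words."""
    words = re.findall(r"[a-z']+", text.lower())
    score = sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


reviews = [
    "Great product, fast delivery!",
    "Support was slow and rude.",
    "It arrived on Tuesday.",
]
labels = [sentiment(review) for review in reviews]
print(list(zip(reviews, labels)))
```

Even this crude version shows the workflow: normalize the text, score it, and aggregate the labels across reviews to track how perception changes over time.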

8. Time series analysis

As its name suggests, time series analysis is used to analyze a set of data points collected over a specified period of time. Although analysts use this method to monitor the data points in a specific interval of time rather than just monitoring them intermittently, time series analysis is not used solely for the purpose of collecting data over time. Instead, it allows researchers to understand whether variables changed during the duration of the study, how the different variables depend on one another, and how the end result was reached.

In a business context, this method is used to understand the causes of different trends and patterns to extract valuable insights. Another way of using this method is with the help of time series forecasting. Powered by predictive technologies, businesses can analyze various data sets over a period of time and forecast different future events.

A great use case to put time series analysis into perspective is seasonality effects on sales. By using time series forecasting to analyze sales data of a specific product over time, you can understand if sales rise during a specific period of time (e.g. swimwear during summertime, or candy during Halloween). These insights allow you to predict demand and prepare production accordingly.
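One simple way to surface a seasonal pattern is to average the same calendar month across several years. The sketch below does exactly that; the `sales` records for a swimwear product and the `monthly_average` helper are hypothetical, and real forecasting would use a dedicated time series model.

```python
from collections import defaultdict

# Hypothetical swimwear sales: (year, month, units sold).
sales = [
    (2022, 1, 40), (2022, 4, 90), (2022, 7, 200), (2022, 10, 70),
    (2023, 1, 50), (2023, 4, 110), (2023, 7, 220), (2023, 10, 80),
]


def monthly_average(records):
    """Average units sold per calendar month across all years in the data."""
    by_month = defaultdict(list)
    for _year, month, units in records:
        by_month[month].append(units)
    return {month: sum(units) / len(units) for month, units in sorted(by_month.items())}


averages = monthly_average(sales)
peak_month = max(averages, key=averages.get)
print(averages)
print("peak month:", peak_month)
```

If the same month stands out year after year, as July does in this made-up data, that is a strong hint to plan stock and production ahead of it.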

9. Decision Trees

The decision tree analysis aims to act as a support tool to make smart and strategic decisions. By visually displaying potential outcomes, consequences, and costs in a tree-like model, researchers and business users can easily evaluate all factors involved and choose the best course of action. Decision trees are helpful to analyze quantitative data and they allow for an improved decision-making process by helping you spot improvement opportunities, reduce costs, and enhance operational efficiency and production.

But how does a decision tree actually work? This method works like a flowchart that starts with the main decision that you need to make and branches out based on the different outcomes and consequences of each decision. Each outcome will outline its own consequences, costs, and gains and, at the end of the analysis, you can compare each of them and make the smartest decision.

Businesses can use them to understand which project is more cost-effective and will bring more earnings in the long run. For example, imagine you need to decide if you want to update your software app or build a new app entirely. Here you would compare the total costs, the time needed to be invested, potential revenue, and any other factor that might affect your decision. In the end, you would be able to see which of these two options is more realistic and attainable for your company or research.
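The app example can be turned into a small calculation by attaching probabilities and payoffs to each branch and comparing expected net values. Every number below (costs, probabilities, revenues) is invented for illustration, and `expected_net_value` is a hypothetical helper; a real decision would also weigh time, risk appetite, and factors that are hard to put a number on.

```python
# Hypothetical decision tree: each option has a cost and a list of
# (probability, revenue) outcomes whose probabilities sum to 1.
options = {
    "update existing app": {
        "cost": 50_000,
        "outcomes": [(0.7, 120_000), (0.3, 60_000)],
    },
    "build new app": {
        "cost": 150_000,
        "outcomes": [(0.5, 400_000), (0.5, 100_000)],
    },
}


def expected_net_value(option):
    """Probability-weighted revenue minus the upfront cost."""
    expected_revenue = sum(p * revenue for p, revenue in option["outcomes"])
    return expected_revenue - option["cost"]


values = {name: expected_net_value(option) for name, option in options.items()}
best = max(values, key=values.get)
print(values)
print("highest expected net value:", best)
```

Changing a single probability or cost and rerunning the comparison is a quick way to see how sensitive the decision is to your assumptions.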

10. Conjoint analysis

Last but not least, we have conjoint analysis. This approach is usually used in surveys to understand how individuals value different attributes of a product or service, and it is one of the most effective methods to extract consumer preferences. When it comes to purchasing, some clients might be more price-focused, others more features-focused, and others might have a sustainable focus. Whatever your customers' preferences are, you can find them with conjoint analysis. Like this, companies can define pricing strategies, packaging options, subscription packages, and more.

A great example of conjoint analysis is with marketing and sales. For instance, a cupcake brand might use conjoint analysis and find that its clients prefer gluten-free options and cupcakes with healthier toppings over super sugary ones. Like this, the cupcake brand can turn these insights into advertisements and promotions to increase sales of this particular type of product. And not only that, conjoint analysis can also help businesses segment their customers based on their interests. This allows them to send different messaging that will bring value to each of the segments.
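A simplified way to see how attribute preferences are extracted is to compare the average rating of each attribute level with the overall average. The sketch below does this for the cupcake example; the `profiles` ratings and the `part_worths` helper are hypothetical, and this averaging shortcut only stands in for the regression-based estimation that conjoint studies normally use (it works here because the made-up design is balanced).

```python
from collections import defaultdict

# Hypothetical survey: each cupcake profile and its average rating (1-10).
profiles = [
    ({"gluten_free": "yes", "topping": "fruit"}, 9),
    ({"gluten_free": "yes", "topping": "sugary"}, 6),
    ({"gluten_free": "no", "topping": "fruit"}, 7),
    ({"gluten_free": "no", "topping": "sugary"}, 4),
]


def part_worths(profiles):
    """Mean rating of each attribute level minus the overall mean rating."""
    overall = sum(rating for _, rating in profiles) / len(profiles)
    by_level = defaultdict(list)
    for attributes, rating in profiles:
        for attribute, level in attributes.items():
            by_level[(attribute, level)].append(rating)
    return {key: sum(r) / len(r) - overall for key, r in by_level.items()}


worths = part_worths(profiles)
for (attribute, level), worth in worths.items():
    print(f"{attribute}={level}: {worth:+.1f}")
```

Positive values mark levels that lift a product's rating and negative values mark levels that lower it, which is the information a brand needs to prioritize features or messaging.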

How To Analyze Data? Top 17 Data Analysis Techniques To Apply

Now that we've answered the questions "what is data analysis?" and "why is it important?", and covered the different data analysis types, it's time to dig deeper into how to perform your analysis by working through these 17 essential techniques.

1. Collaborate your needs

Before you begin analyzing or drilling down into any techniques, it's crucial to sit down collaboratively with all key stakeholders within your organization, decide on your primary campaign or strategic goals, and gain a fundamental understanding of the types of insights that will best benefit your progress or provide you with the level of vision you need to evolve your organization.

2. Establish your questions

Once you've outlined your core objectives, you should consider which questions will need answering to help you achieve your mission. This is one of the most important techniques as it will shape the very foundations of your success.

To help you ask the right things and ensure your data works for you, you have to ask the right data analysis questions.

3. Data democratization

After giving your data analytics methodology some real direction, and knowing which questions need answering to extract optimum value from the information available to your organization, you should continue with democratization.

Data democratization is an action that aims to connect data from various sources efficiently and quickly so that anyone in your organization can access it at any given moment. You can extract data in text, images, videos, numbers, or any other format, and then perform cross-database analysis to achieve more advanced insights to share with the rest of the company interactively.

Once you have decided on your most valuable sources, you need to take all of this into a structured format to start collecting your insights. For this purpose, datapine offers an easy all-in-one data connectors feature to integrate all your internal and external sources and manage them at your will. Additionally, datapine's end-to-end solution automatically updates your data, allowing you to save time and focus on performing the right analysis to grow your company.

4. Think of governance

When collecting data in a business or research context you always need to think about security and privacy. With data breaches becoming a topic of concern for businesses, the need to protect your clients' or subjects' sensitive information becomes critical.

To ensure that all this is taken care of, you need to think of a data governance strategy. According to Gartner, this concept refers to "the specification of decision rights and an accountability framework to ensure the appropriate behavior in the valuation, creation, consumption, and control of data and analytics." In simpler words, data governance is a collection of processes, roles, and policies that ensure the efficient use of data while still achieving the main company goals. It ensures that clear roles are in place for who can access the information and how they can access it. In time, this not only ensures that sensitive information is protected but also allows for an efficient analysis as a whole.

5. Clean your data

After harvesting from so many sources you will be left with a vast amount of information that can be overwhelming to deal with. At the same time, you can be faced with incorrect data that can be misleading to your analysis. The smartest thing you can do to avoid dealing with this in the future is to clean the data. This is fundamental before visualizing it, as it will ensure that the insights you extract from it are correct.

There are many things that you need to look for in the cleaning process. The most important one is to eliminate any duplicate observations; these usually appear when using multiple internal and external sources of information. You can also add any missing codes, fix empty fields, and eliminate incorrectly formatted data.

Another usual form of cleaning is done with text data. As we mentioned earlier, most companies today analyze customer reviews, social media comments, questionnaires, and several other text inputs. In order for algorithms to detect patterns, text data needs to be revised to avoid invalid characters or any syntax or spelling errors.

Most importantly, the aim of cleaning is to prevent you from arriving at false conclusions that can damage your business in the long run. By using clean data, you will also help BI tools to interact better with your information and create better reports for your organization.
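The cleaning steps described in this section (trimming white space, fixing formatting, removing duplicates, handling empty fields) can be sketched as follows. The record layout, the choice of email as the duplicate key, and the decision to drop records without an email are all assumptions for this example; your own rules will depend on the data.

```python
def clean_records(records):
    """Normalize whitespace and case, skip records without an email,
    and keep only the first record seen for each email address."""
    seen = set()
    cleaned = []
    for record in records:
        name = " ".join(record.get("name", "").split())  # collapse stray spaces
        email = record.get("email", "").strip().lower()  # consistent formatting
        if not email:        # empty key field: nothing to match on
            continue
        if email in seen:    # duplicate observation
            continue
        seen.add(email)
        cleaned.append({"name": name, "email": email})
    return cleaned


# Hypothetical raw customer records merged from two sources.
raw = [
    {"name": "  Ana   Lopez ", "email": "ANA@example.com "},
    {"name": "Ana Lopez", "email": "ana@example.com"},
    {"name": "Ben", "email": ""},
]

cleaned = clean_records(raw)
print(cleaned)
```

Note that the two "Ana Lopez" rows only count as duplicates after normalization, which is why formatting fixes usually come before de-duplication.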

6. Set your KPIs

Once you've set your sources, cleaned your data, and established clear-cut questions you want your insights to answer, you need to set a host of key performance indicators (KPIs) that will help you track, measure, and shape your progress in a number of key areas.

KPIs are critical to both qualitative and quantitative analysis research. This is one of the primary methods of data analysis you certainly shouldn't overlook.

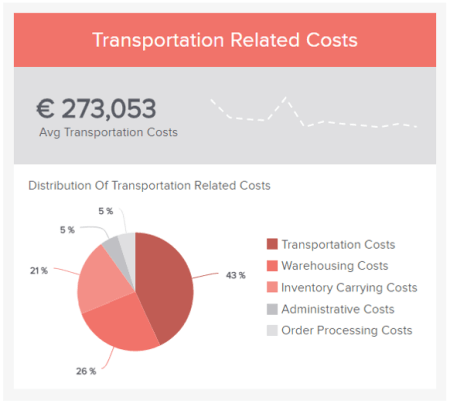

To help you set the best possible KPIs for your initiatives and activities, here is an example of a relevant logistics KPI: transportation-related costs. If you want to see more, go explore our collection of key performance indicator examples.

7. Omit useless data

Having given your data analysis techniques and methods true purpose and defined your mission, you should explore the raw data you've collected from all sources and use your KPIs as a reference for chopping out any information you deem to be useless.

Trimming the informational fat is one of the most crucial methods of analysis as it will allow you to focus your analytical efforts and squeeze every drop of value from the remaining 'lean' information.

Any stats, facts, figures, or metrics that don't align with your business goals or fit with your KPI management strategies should be eliminated from the equation.

8. Build a data management roadmap

While, at this point, this particular step is optional (you will have already gained a wealth of insight and formed a fairly sound strategy by now), creating a data governance roadmap will help your data analysis methods and techniques become successful on a more sustainable basis. These roadmaps, if developed properly, are also built so they can be tweaked and scaled over time.

Invest ample time in developing a roadmap that will help you store, manage, and handle your data internally, and you will make your analysis techniques all the more fluid and functional, one of the most powerful types of data analysis methods available today.

9. Integrate technology

There are many ways to analyze data, but one of the most vital aspects of analytical success in a business context is integrating the right decision support software and technology.

Robust analysis platforms will not only allow you to pull critical data from your most valuable sources while working with dynamic KPIs that will offer you actionable insights; they will also present them in a digestible, visual, interactive format from one central, live dashboard. A data methodology you can count on.

By integrating the right technology within your data analysis methodology, you'll avoid fragmenting your insights, saving you time and effort while allowing you to enjoy the maximum value from your business's most valuable insights.

For a look at the power of software for the purpose of analysis and to enhance your methods of analyzing, glance over our selection of dashboard examples.

10. Respond your questions

By considering each of the above efforts, working with the right technology, and fostering a cohesive internal civilization where everyone buys into the different ways to clarify data also as the ability of digital intelligence, you will swiftly first to answer your most called-for business organization questions. Arguably, the best way to make your data concepts attainable across the organisation is through information visualization.

11. Visualize your data

Online data visualization is a powerful tool as it lets you tell a story with your metrics, allowing users across the organization to extract meaningful insights that aid business evolution, and it covers all the different ways to analyze data.

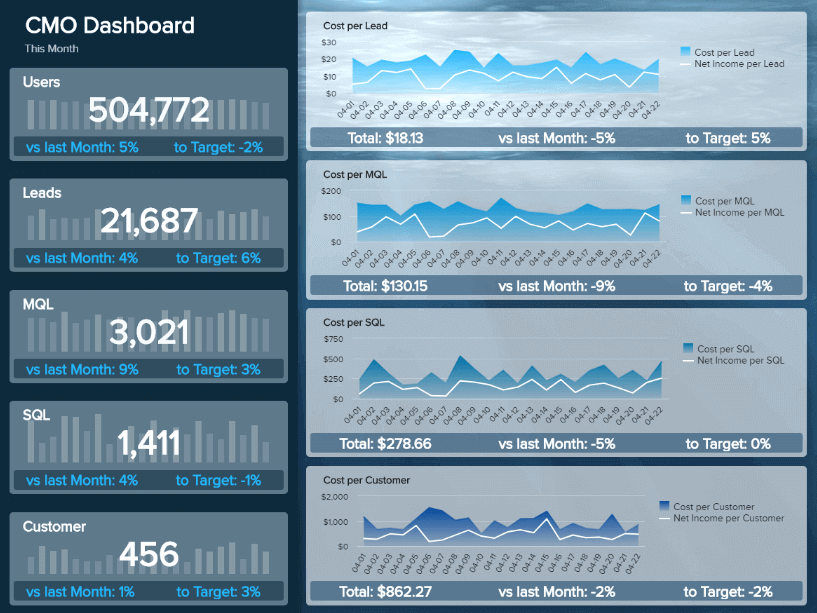

The purpose of analyzing is to make your entire organization more informed and intelligent, and with the right platform or dashboard, this is simpler than you think, as demonstrated by our marketing dashboard.



This visual, dynamic, and interactive online dashboard is designed to give Chief Marketing Officers (CMO) an overview of relevant metrics to help them understand if they achieved their monthly goals.

In detail, this example generated with a modern dashboard creator displays interactive charts for monthly revenues, costs, net income, and net income per customer; all of them are compared with the previous month so that you can understand how the data fluctuated. In addition, it shows a detailed summary of the number of users, customers, SQLs, and MQLs per month to visualize the whole picture and extract relevant insights or trends for your marketing reports.

The CMO dashboard is perfect for C-level management as it can help them monitor the strategic outcome of their marketing efforts and make data-driven decisions that can benefit the company exponentially.
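The comparison with the previous month that the dashboard displays can be approximated by hand. The sketch below is a minimal illustration, not how any particular dashboard tool computes it; the function name `month_over_month` and the revenue figures are made up for the example.

```python
def month_over_month(values):
    """Percentage change of each value versus the one before it.

    The first entry has no previous month, so its change is None.
    A previous value of zero also yields None to avoid dividing by zero.
    """
    changes = [None]
    for previous, current in zip(values, values[1:]):
        if previous == 0:
            changes.append(None)
        else:
            changes.append((current - previous) * 100 / previous)
    return changes


# Hypothetical monthly revenue figures.
months = ["Jan", "Feb", "Mar"]
revenue = [120000, 132000, 125400]

for month, change in zip(months, month_over_month(revenue)):
    label = "n/a" if change is None else f"{change:+.1f}%"
    print(month, label)
```

The same function can be applied to costs, net income, or lead counts, since it only assumes an ordered list of monthly values.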

12. Be careful with interpretation

We already dedicated an entire post to data interpretation as it is a fundamental part of the process of data analysis. It gives meaning to the analytical information and aims to drive a concise conclusion from the analysis results. Since most of the time companies are dealing with data from many different sources, the interpretation stage needs to be done carefully and properly in order to avoid misinterpretations.

To help you through the process, here we list three common pitfalls that you need to avoid at all costs when looking at your data:

- Correlation vs. causation: The human brain is wired to find patterns. This behavior leads to one of the most common mistakes when performing interpretation: confusing correlation with causation. Although these two aspects can exist simultaneously, it is not correct to assume that because two things happened together, one provoked the other. A piece of advice to avoid falling into this mistake is never to trust just intuition, trust the data. If there is no objective evidence of causation, then always stick to correlation.

- Confirmation bias: This phenomenon describes the tendency to select and interpret only the data necessary to prove one hypothesis, often ignoring the elements that might disprove it. Even if it's not done on purpose, confirmation bias can represent a real problem, as excluding relevant information can lead to false conclusions and, therefore, bad business decisions. To avoid it, always try to disprove your hypothesis instead of proving it, share your analysis with other team members, and avoid drawing any conclusions before the entire analytical project is finalized.

- Statistical significance: In short, statistical significance helps analysts understand if a result is actually accurate or if it happened because of a sampling error or pure chance. The level of statistical significance needed might depend on the sample size and the industry being analyzed. In any case, ignoring the significance of a result when it might influence decision-making can be a huge mistake.
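To make the statistical significance point concrete, here is a minimal sketch of a permutation test, one of several ways to check whether a difference between two groups could plausibly be pure chance. The article does not prescribe this method; the function name `permutation_p_value`, the number of permutations, and the sample figures are assumptions made for illustration.

```python
import random


def permutation_p_value(group_a, group_b, n_permutations=10_000, seed=0):
    """Estimate a two-sided p-value for the difference in group means.

    The values are repeatedly shuffled between the two groups; the p-value
    is the share of shuffles whose mean gap is at least as large as the
    gap that was actually observed.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is repeatable
    n_a = len(group_a)
    pooled = list(group_a) + list(group_b)

    def mean_gap(values):
        first, second = values[:n_a], values[n_a:]
        return abs(sum(first) / len(first) - sum(second) / len(second))

    observed = mean_gap(pooled)
    at_least_as_extreme = 0
    for _ in range(n_permutations):
        rng.shuffle(pooled)
        # Small tolerance guards against floating-point rounding.
        if mean_gap(pooled) >= observed - 1e-12:
            at_least_as_extreme += 1
    # Add-one correction keeps the estimate strictly above zero.
    return (at_least_as_extreme + 1) / (n_permutations + 1)


# Hypothetical daily order values under two page designs.
design_a = [52, 48, 50, 47, 53, 49, 51, 50]
design_b = [55, 57, 54, 58, 56, 53, 59, 55]

p_value = permutation_p_value(design_a, design_b)
print(f"estimated p-value: {p_value:.4f}")
```

A small p-value suggests the gap is unlikely to come from chance alone. It says nothing about why the gap exists, so the correlation vs. causation caveat above still applies.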

13. Build a narrative

Now, we're going to look at how you can bring all of these elements together in a way that will benefit your business - starting with a little something called data storytelling.

The human brain responds incredibly well to strong stories or narratives. Once you've cleansed, shaped, and visualized your most invaluable data using various BI dashboard tools, you should strive to tell a story - one with a clear beginning, middle, and end.

By doing so, you will make your analytical efforts more accessible, digestible, and universal, empowering more people within your organization to use your discoveries to their actionable advantage.

14. Consider autonomous technology

Autonomous technologies, such as artificial intelligence (AI) and machine learning (ML), play a significant role in the advancement of understanding how to analyze data more effectively.

Gartner predicts that by the end of this year, 80% of emerging technologies will be developed with AI foundations. This is a testament to the ever-growing power and value of autonomous technologies.

At the moment, these technologies are revolutionizing the analysis industry. Some examples that we mentioned earlier are neural networks, intelligent alarms, and sentiment analysis.

15. Share the load

If you work with the right tools and dashboards, you will be able to present your metrics in a digestible, value-driven format, allowing almost everyone in the organization to connect with and use relevant data to their advantage.

Modern dashboards consolidate data from various sources, providing access to a wealth of insights in one centralized location, no matter if you need to monitor recruitment metrics or generate reports that need to be sent across numerous departments. Moreover, these cutting-edge tools offer access to dashboards from a multitude of devices, meaning that everyone within the business can connect with practical insights remotely - and share the load.

Once everyone is able to work with a data-driven mindset, you will catalyze the success of your business in ways you never thought possible. And when it comes to knowing how to analyze data, this kind of collaborative approach is essential.

16. Data analysis tools

In order to perform high-quality analysis of data, it is fundamental to use tools and software that will ensure the best results. Here we leave you a small summary of four fundamental categories of data analysis tools for your organization.

- Business Intelligence: BI tools allow you to process significant amounts of data from several sources in any format. This way, you can not only analyze and monitor your data to extract relevant insights but also create interactive reports and dashboards to visualize your KPIs and use them for your company's good. datapine is an amazing online BI software that is focused on delivering powerful online analysis features that are accessible to beginner and advanced users. As such, it offers a full-service solution that includes cutting-edge analysis of data, KPI visualization, live dashboards, and reporting, as well as artificial intelligence technologies to predict trends and minimize risk.

- Statistical analysis: These tools are usually designed for scientists, statisticians, market researchers, and mathematicians, as they allow them to perform complex statistical analyses with methods like regression analysis, predictive analysis, and statistical modeling. A good tool to perform this type of analysis is RStudio as it offers a powerful data modeling and hypothesis testing feature that can cover both academic and general data analysis. This tool is one of the favorites in the industry, due to its capability for data cleaning, data reduction, and performing advanced analysis with several statistical methods. Another relevant tool to mention is SPSS from IBM. The software offers advanced statistical analysis for users of all skill levels. Thanks to a vast library of machine learning algorithms, text analysis, and a hypothesis testing approach, it can help your company find relevant insights to drive better decisions. SPSS also works as a cloud service that enables you to run it anywhere.

- SQL Consoles: SQL is a programming language often used to handle structured data in relational databases. Tools like these are popular among data scientists as they are extremely effective at unlocking the value of these databases. Without a doubt, one of the most used SQL tools on the market is MySQL Workbench. This tool offers several features such as a visual tool for database modeling and monitoring, complete SQL optimization, along with administration tools, and visual performance dashboards to keep track of KPIs.

- Data Visualization: These tools are used to represent your data through charts, graphs, and maps that allow you to find patterns and trends in the data. datapine's already mentioned BI platform also offers a wealth of powerful online data visualization tools with several benefits. Some of them include: delivering compelling data-driven presentations to share with your entire company, the ability to see your data online with any device wherever you are, an interactive dashboard design feature that enables you to showcase your results in an interactive and understandable way, and online self-service reports that can be used simultaneously by several people to enhance team productivity.

17. Refine your process constantly

Last is a step that might seem obvious to some people, but it can be easily ignored if you think you are done. Once you have extracted the needed results, you should always take a retrospective look at your project and think about what you can improve. As you saw throughout this long list of techniques, data analysis is a complex process that requires constant refinement. For this reason, you should always go one step further and keep improving.

Quality Criteria For Data Analysis

So far we've covered a list of methods and techniques that should help you perform efficient data analysis. But how do you measure the quality and validity of your results? This is done with the help of some scientific quality criteria. Here we will go into a more theoretical area that is critical to understanding the fundamentals of statistical analysis in science. However, you should also be aware of these steps in a business context, as they will allow you to assess the quality of your results in the correct way. Let's dig in.

- Internal validity: The results of a survey are internally valid if they measure what they are supposed to measure and thus provide credible results. In other words, internal validity measures the trustworthiness of the results and how they can be affected by factors such as the research design, operational definitions, how the variables are measured, and more. For instance, imagine you are doing an interview to ask people if they brush their teeth two times a day. While most of them will answer yes, you can still notice that their answers correspond to what is socially acceptable, which is to brush your teeth at least twice a day. In this case, you can't be 100% sure if respondents actually brush their teeth twice a day or if they just say that they do; therefore, the internal validity of this interview is very low.

- External validity: Essentially, external validity refers to the extent to which the results of your research can be applied to a broader context. It basically aims to prove that the findings of a study can be applied in the real world. If the research can be applied to other settings, individuals, and times, then the external validity is high.

- Reliability: If your research is reliable, it means that it can be reproduced. If your measurement were repeated under the same conditions, it would produce similar results. This means that your measuring instrument consistently produces reliable results. For example, imagine a doctor builds a symptoms questionnaire to detect a specific disease in a patient. Then, various other doctors use this questionnaire but end up diagnosing the same patient with a different condition. This means the questionnaire is not reliable for detecting the initial disease. Another important note here is that in order for your research to be reliable, it also needs to be objective. If the results of a study are the same, independent of who assesses them or interprets them, the study can be considered reliable. Let's look at the objectivity criteria in more detail now.

- Objectivity: In data science, objectivity means that the researcher needs to stay fully objective when it comes to their analysis. The results of a study need to be affected by objective criteria and not by the beliefs, personality, or values of the researcher. Objectivity needs to be ensured when you are gathering the data; for example, when interviewing individuals, the questions need to be asked in a way that doesn't influence the results. Paired with this, objectivity also needs to be considered when interpreting the data. If different researchers reach the same conclusions, then the study is objective. For this last point, you can set predefined criteria to interpret the results to ensure all researchers follow the same steps.

The discussed quality criteria mostly cover potential influences in a quantitative context. Analysis in qualitative research has by default additional subjective influences that must be controlled in a different way. Therefore, there are other quality criteria for this kind of research such as credibility, transferability, dependability, and confirmability. You can see each of them in more detail on this resource.
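The reliability and objectivity criteria in this section both ask whether different assessors arrive at the same result. One common way to put a number on that is Cohen's kappa, which compares the observed agreement between two raters with the agreement expected by chance. The article does not name this statistic; it is brought in here as an illustration, and the function name `cohens_kappa` and the diagnosis labels are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters labelling the same items.

    1.0 means perfect agreement, 0.0 means agreement no better than chance.
    """
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Share of items on which the two raters gave the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    # Agreement expected if each rater labelled at random with their own label rates.
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both raters used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Hypothetical diagnoses from two doctors using the same questionnaire.
doctor_1 = ["flu", "cold", "flu", "flu", "cold", "cold"]
doctor_2 = ["flu", "cold", "cold", "flu", "cold", "flu"]

print(f"kappa: {cohens_kappa(doctor_1, doctor_2):.2f}")
```

A low kappa in a case like the questionnaire example would suggest that the instrument, or the criteria used to interpret it, leaves too much room for individual judgment.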

Data Analysis Limitations & Barriers

Analyzing data is not an easy task. As you've seen throughout this post, there are many steps and techniques that you need to apply in order to extract useful information from your research. While a well-performed analysis can bring various benefits to your organization, it doesn't come without limitations. In this section, we will discuss some of the main barriers you might encounter when conducting an analysis. Let's look at them in more detail.

- Objectivity: Arguably one of the biggest barriers when it comes to data analysis in research is to stay objective. When trying to prove a hypothesis, researchers might find themselves, intentionally or unintentionally, directing the results toward an outcome that they want. To avoid this, always question your assumptions and avoid confusing facts with opinions. You can also show your findings to a research partner or external person to confirm that your results are objective.

- Flawed correlation: Misleading statistics can significantly damage your research. We've already pointed out a few interpretation issues previously in the post, but it is an important barrier that we can't avoid addressing here as well. Flawed correlations occur when two variables appear related to each other but are not. Confusing correlation with causation can lead to a wrong interpretation of results, which can lead to building wrong strategies and a loss of resources; therefore, it is very important to identify the different interpretation mistakes and avoid them.

- Privacy concerns: In some cases, data collection can be subject to privacy regulations. Businesses gather all kinds of data from their customers, from purchasing behaviors to addresses and phone numbers. If this falls into the wrong hands due to a breach, it can affect the security and confidentiality of your clients. To avoid this issue, you need to collect only the data that is needed for your research and, if you are using sensitive facts, make them anonymous so customers are protected. The misuse of customer data can severely damage a business's reputation, so it is important to keep an eye on privacy.

- Lack of communication between teams: When it comes to performing data analysis on a business level, it is very likely that each department and team will have different goals and strategies. Yet, they are all working toward the same common goal of helping the business run smoothly and keep growing. When teams are not connected and communicating with each other, it can directly impact the way general strategies are built. To avoid these issues, tools such as data dashboards enable teams to stay connected through data in a visually appealing way.

- Innumeracy: Businesses are working with data more and more every day. While there are many BI tools available to perform effective analysis, data literacy is still a constant barrier. Not all employees know how to apply analysis techniques or extract insights from them. To prevent this from happening, you can implement different training opportunities that will prepare every relevant user to deal with data.
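Returning to the privacy barrier above, the advice to collect only what is needed and to hide sensitive details can be sketched in a few lines. The example below drops fields the analysis does not need and replaces the customer's email with a keyed hash. Strictly speaking this is pseudonymization rather than full anonymization, and it is not a complete compliance measure. The function name `pseudonymize`, the field names, and the key are all hypothetical.

```python
import hashlib
import hmac


def pseudonymize(record, secret_key, needed_fields, id_field="email"):
    """Keep only the needed fields and swap the identifier for a keyed hash.

    The same identifier always maps to the same token, so records can still
    be grouped per customer without exposing who the customer is.
    """
    token = hmac.new(
        secret_key, record[id_field].encode("utf-8"), hashlib.sha256
    ).hexdigest()
    cleaned = {name: record[name] for name in needed_fields if name in record}
    cleaned["customer_token"] = token
    return cleaned


# Hypothetical key; in practice it would be stored securely, not in source code.
key = b"replace-with-a-securely-stored-key"

# Hypothetical customer record.
customer = {
    "email": "jane@example.com",
    "phone": "555-0100",
    "address": "1 Main Street",
    "purchase_total": 249.90,
    "product_category": "shoes",
}

safe_record = pseudonymize(customer, key, ["purchase_total", "product_category"])
print(sorted(safe_record))
```

Using a keyed hash instead of a plain one means someone who obtains the output cannot simply hash a list of known emails to reverse the tokens, as long as the key stays secret.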

Data Analysis In The Big Data Environment

Big data is invaluable to today's businesses, and by using different methods for data analysis, it's possible to view your data in a way that can help you turn insight into positive action.

To inspire your efforts and put the importance of big data into context, here are some insights that you should know:

- By 2023 the big data industry is expected to be worth approximately $77 billion.

- 94% of enterprises say that analyzing information is important for their growth and digital transformation.

- Companies that exploit the full potential of their data can increase their operating margins by 60%.

- We already told you about the benefits of Artificial Intelligence throughout this article. This industry's financial impact is expected to grow up to $40 billion by 2025.

Data analysis concepts may come in many forms, but fundamentally, any solid methodology will help to make your business more streamlined, cohesive, insightful, and successful than ever before.

Key Takeaways From Data Analysis

As we reach the end of our data analysis journey, we leave a small summary of the main methods and techniques to perform excellent analysis and grow your business.

10 Essential Types of Data Analysis Methods:

- Cluster analysis

- Cohort analysis

- Regression analysis

- Factor analysis

- Neural Networks

- Data Mining

- Text analysis

- Time series analysis

- Decision trees

- Conjoint analysis

Top 17 Data Analysis Techniques:

- Collaborate your needs

- Establish your questions

- Data democratization

- Think of data governance

- Clean your data

- Set your KPIs

- Omit useless data

- Build a data management roadmap

- Integrate technology

- Answer your questions

- Visualize your data

- Interpretation of data

- Consider autonomous technology

- Build a narrative

- Share the load

- Data Analysis tools

- Refine your process constantly

We've pondered the data analysis definition and drilled down into the practical applications of data-centric analytics, and one thing is clear: by taking measures to arrange your data and making your metrics work for you, it's possible to transform raw information into action - the kind that will push your business to the next level.

Yes, good data analytics techniques result in enhanced business intelligence (BI). To help you understand this notion in more detail, read our exploration of business intelligence reporting.

And, if you're ready to perform your own analysis, drill down into your facts and figures while interacting with your data on amazing visuals, you can try our software for a free, 14-day trial.

Source: https://www.datapine.com/blog/data-analysis-methods-and-techniques/
